X Space #13 · AGI vs LLM: Why Bigger Models Won’t Get Us to Artificial General Intelligence

Watch the full recording on YouTube · Listen on X

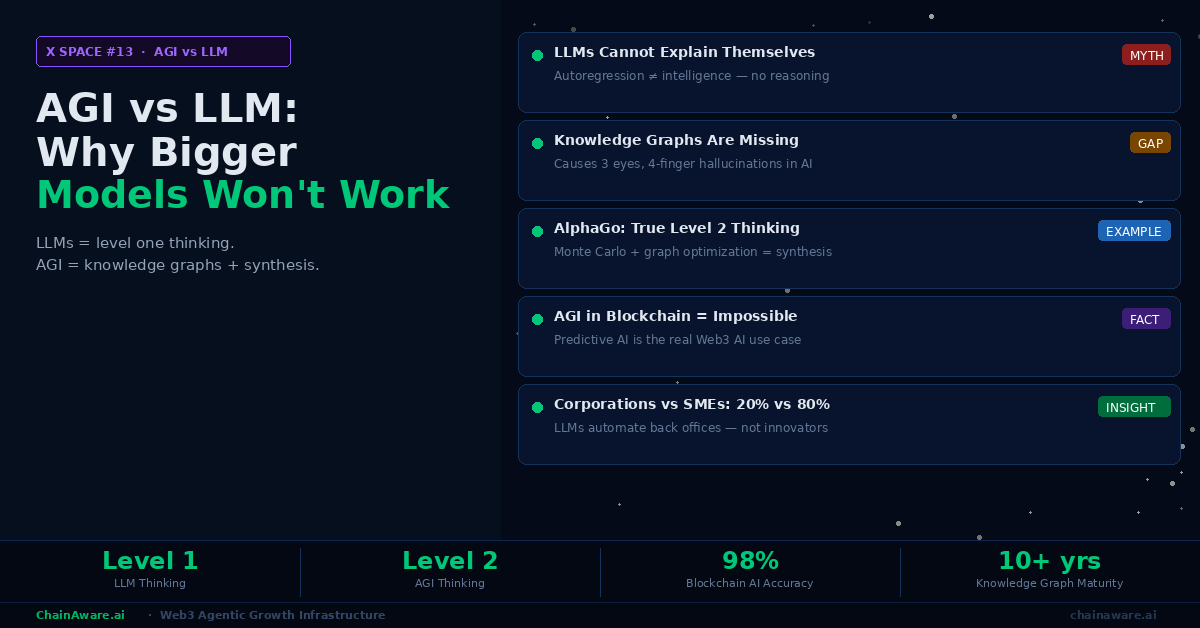

X Space #13 takes a different direction from ChainAware’s usual AdTech and user acquisition focus. Instead, co-founders Martin and Tarmo spend 65 minutes dismantling one of the most pervasive misconceptions in technology: that large language models are a path to artificial general intelligence, and that building bigger models with more compute will eventually produce true AI. The session is a precise technical and economic analysis — covering why LLMs are fundamentally incapable of reasoning, what the three actual components of AGI are, why all those components already exist in production-ready form, and why the entire industry narrative around LLMs serves corporate interests rather than genuine intelligence research. The session also covers what all of this means for Web3 specifically — and why blockchain-based “AGI projects” are what Tarmo calls, bluntly, gambling.

In This Article

- What an LLM Actually Is: Autoregression, Not Intelligence

- The Alice Puzzle: Why LLMs Fail Basic Logic

- Level One Thinking: Why LLMs Cannot Explain Themselves

- What AGI Actually Requires: Three Components Already Available

- Knowledge Graphs: The Missing Link That Causes Six Fingers

- Hierarchical Forward Planning: How Kasparov Thinks 12 Moves Ahead

- Level Two Thinking: Synthesis Across Search Spaces

- AlphaGo as Proof: AGI Components Work When Combined

- The Llama 8B Experiment: Small Model + Graph Beats Large LLM

- Who Benefits from the LLM Narrative: Corporations, Not AGI

- The SME Problem: 80% of the Economy That LLMs Cannot Help

- AGI on Blockchain: Why It Is Impossible

- Real AI in Web3: Predictive Models, Not LLM Wrappers

- Specialized AGIs: The Actual Future of Artificial Intelligence

- The DeFi Narrative Parallel: When VCs Push the Wrong Path

- Comparison Tables

- FAQ

What an LLM Actually Is: Autoregression, Not Intelligence

Tarmo opens the technical discussion with a precise definition of what large language models actually do — stripped of the marketing language and the capability demonstrations that obscure the underlying mechanism. The definition is simple and, once stated clearly, immediately reveals why LLMs cannot serve as a foundation for artificial general intelligence.

An LLM is an autoregressive model. Given a sequence of words (the prompt), it computes a probability for every possible next word (strictly, the next token, which may be a whole word or a word fragment) and picks the most probable one or samples from the most probable candidates. Then, treating the original prompt plus that predicted word as the new sequence, it predicts the next word again. This process repeats until the model generates a complete response. As Tarmo explains: “What are large language models? They just predict you the next probable word. It is just a prediction of the next probable word. And what happens is we think it is a kind of intelligence. No, it is not intelligence. It is something, but you just add words after words afterwards. These are LLMs.”
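To make the loop concrete, here is a minimal sketch of greedy autoregression in Python, assuming a word-level bigram counter in place of a neural network. Real LLMs use neural networks over subword tokens and condition on far longer contexts; the toy corpus and the helper names `train_bigram` and `generate` are illustrative assumptions. The shape of the loop is the point: predict the next word, append it, predict again.

```python
from collections import Counter, defaultdict


def train_bigram(corpus: list[str]) -> dict[str, Counter]:
    """Count, for every word, which words followed it in the training text."""
    counts: dict[str, Counter] = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.split()
        for current, following in zip(words, words[1:]):
            counts[current][following] += 1
    return counts


def generate(counts: dict[str, Counter], prompt: str, max_new_words: int = 5) -> str:
    """Greedy autoregression: append the most frequent next word, then repeat."""
    words = prompt.split()
    for _ in range(max_new_words):
        followers = counts.get(words[-1])
        if not followers:  # nothing ever followed this word in training: stop
            break
        next_word, _ = followers.most_common(1)[0]  # ties go to the word seen first
        words.append(next_word)  # the prediction becomes part of the next input
    return " ".join(words)


corpus = [
    "the cat sat on the mat",
    "the cat sat on the sofa",
    "the dog sat on the mat",
]
model = train_bigram(corpus)
print(generate(model, "the dog", max_new_words=4))  # the dog sat on the cat
```

On this toy corpus the output is “the dog sat on the cat”. Every step is a locally likely continuation, yet the sentence appears nowhere in the training data and states something the data never said. A real LLM is vastly more fluent because it conditions on the whole context, but the generation loop has the same structure.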

The Training Data Foundation

The model’s ability to produce coherent, knowledgeable-sounding responses comes from the training process, not from any reasoning capability. LLMs train on enormous quantities of text, much of it drawn from the public internet, and learn statistical associations between word sequences across this corpus. When a user asks about quantum physics, the model produces physics-sounding text because the training data contained large quantities of physics writing, and the model learned which word sequences follow which physics prompts. However, this is pattern reproduction, not understanding. The model has no representation of what quantum mechanics means, no model of the physical world, and no ability to reason about whether its output is correct. It produces statistically likely text about the topic. For more on the distinction between predictive AI (which ChainAware uses) and LLMs, see our predictive AI guide.

The Alice Puzzle: Why LLMs Fail Basic Logic

Martin introduces a specific puzzle that elegantly demonstrates the reasoning failure that defines LLMs. The puzzle is simple and the answer is immediately obvious to any human who thinks about it for a moment. However, LLMs consistently fail it — and their failure reveals precisely what is missing from their architecture.

The puzzle: “If Alice has four brothers and two sisters, how many sisters do Alice’s brothers have?” The answer is three — Alice herself is a sister to her brothers, so her brothers have three sisters in total (the two sisters Alice mentioned plus Alice). Any human who pauses to model the family structure arrives at this instantly. LLMs, by contrast, typically answer two — because the training data overwhelmingly pairs “Alice’s sisters” questions with the number of sisters Alice has, and the model reproduces this statistical pattern without constructing a representation of the actual family relationships involved.

Why the Failure Is Revealing

This failure is not a quirk that better training will fix; it is a structural consequence of how autoregression works. Solving the Alice puzzle requires building a mental model of the family structure, recognising Alice’s role within that structure (she is one of the children, and therefore a sister to each of her brothers), and then applying that model to answer the question. No amount of statistical pattern matching over word sequences achieves this. The model does not “understand” family relationships, cannot construct a situational model, and therefore cannot reason about novel configurations of information it has processed statistically. As Tarmo notes: “If people tell you that LLM is intelligence and leads us to AGI, then there is probably some critical bit of reflection missing.” For more on how ChainAware’s predictive AI differs, using specific trained models with verifiable accuracy, see our behavioral analytics guide.
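The difference is easy to see in code. The Python sketch below, with hypothetical helper names, answers the question the way the paragraph describes: it first builds an explicit model of the family, with Alice included as one of the children, and only then counts. Once that structure exists, the answer follows from a one-line rule rather than from which number usually follows similar wording.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Person:
    name: str
    sex: str  # "F" or "M"


def build_family(girl: str, n_brothers: int, n_sisters: int) -> list[Person]:
    """Every child in the family, with the named girl included explicitly."""
    children = [Person(girl, "F")]
    children += [Person(f"brother_{i}", "M") for i in range(n_brothers)]
    children += [Person(f"sister_{i}", "F") for i in range(n_sisters)]
    return children


def count_sisters(person: Person, family: list[Person]) -> int:
    """A sister is any female child in the family other than the person themself."""
    return sum(1 for child in family if child.sex == "F" and child != person)


family = build_family("Alice", n_brothers=4, n_sisters=2)
alice = family[0]
a_brother = next(child for child in family if child.sex == "M")
print(count_sisters(alice, family))      # 2
print(count_sisters(a_brother, family))  # 3: her two sisters plus Alice
```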

Real Predictive AI — Not LLM Guesswork

ChainAware Fraud Detector — 98% Accuracy, Verifiable, Real-Time

Unlike LLMs that cannot explain their outputs, ChainAware’s fraud detection models are trained on specific blockchain behavioral patterns, backtested against CryptoScamDB, and deliver verifiable 98% accuracy. No hallucinations. No autoregression. Predictive AI that can tell you exactly why a wallet is flagged. Free for individual checks.

Level One Thinking: Why LLMs Cannot Explain Themselves

Tarmo introduces a conceptual framework that structures the entire session’s analysis: the distinction between level one and level two thinking. The distinction is not new to cognitive science (Daniel Kahneman’s Thinking, Fast and Slow covers similar territory under the labels System 1 and System 2), but Tarmo applies it specifically to characterise what LLMs can and cannot do.

Level one thinking is what Tarmo calls subconsciousness-driven response: you encounter a stimulus and produce an answer from ingrained patterns without being able to explain why you answered that way. A native speaker instantly knows that “the cat sat on the mat” is grammatically correct without consciously applying grammar rules. Level one thinking is fast, automatic, and inarticulate — it produces answers but not explanations. As Tarmo explains: “Level one thinking is you have something in your subconsciousness programmed, whoever programmed it. And you just respond question. You don’t know why. You just respond something. You have given an answer. You cannot explain why you answered this question like this.”

LLMs Are Entirely Level One

LLMs operate entirely within level one thinking. They produce responses from pattern matching against training data but cannot explain the reasoning behind any output. If asked “why did you answer like that?”, an LLM either generates a plausible-sounding post-hoc justification (itself just another autoregressive sequence) or acknowledges inability to explain. There is no internal reasoning process to describe because no such process occurred. The output emerged from statistical pattern matching, not deliberate logical inference. This limitation is fundamental rather than incidental — no scaling of model size or training data addresses it, because the architecture does not contain the mechanism for reasoning that would be required.

What AGI Actually Requires: Three Components Already Available

Having established what LLMs are and what they cannot do, Martin and Tarmo turn to what AGI would actually require. Their argument is counterintuitive but well-evidenced: all three required components already exist as mature, production-ready technologies. The barrier to AGI is not scientific — it is a matter of integrating existing pieces rather than inventing new ones.

The first component is hierarchical forward planning — the ability to reason about sequences of future actions across a decision tree, selecting action paths based on their expected outcomes. This is the mechanism behind chess engines and AlphaGo. The second component is knowledge graphs — structured representations of real-world entities, their properties, and the relationships between them. Knowledge graphs encode the facts that constrain what makes sense in the real world: cars have four wheels, humans have five fingers, a family has specific structural relationships. The third component is synthesis — the ability to combine findings from different search spaces and knowledge domains to generate genuinely new information, rather than reproducing existing information in different arrangements. As Martin frames it: “For AGI, it’s not about one hierarchical decision space, but starting to combine — scenario one, scenario two, scenario three — and we start to combine them. And through this synthesis, we are creating new information.”

Knowledge Graphs: The Missing Link That Causes Six Fingers

Tarmo introduces knowledge graphs as one of the most mature and under-utilised components in current AI systems. A knowledge graph is a structured database that encodes real-world entities and their relationships: “human hand — five fingers — each finger has following joints.” This structured knowledge gives any system that uses it a grounding in physical reality that pure pattern-matching from text lacks entirely.

Knowledge graphs have been in mass production for over a decade. Both Google and Bing use knowledge graph technology to power their search result enrichment features — the panels that appear when you search for a public figure, a landmark, or a well-known organisation. These panels draw on structured knowledge graphs containing millions of entities and their relationships. The technology is well-understood, well-deployed, and proven at scale.

Why AI-Generated Images Have Three Eyes

The absence of knowledge graphs in current generative AI systems explains, in Tarmo’s account, one of the most widely noticed failure modes in AI image generation: anatomical errors. Images produced by generative models frequently show hands with six fingers, faces with three eyes, or bodies with impossible proportions. On this view, the errors occur because the models lack a structured knowledge graph encoding human anatomy. Without a representation that explicitly encodes “human hand has five fingers, arranged in a specific way with specific joints,” the model learns statistical patterns from training images but has no constraint that enforces anatomical correctness. As Tarmo explains: “LLMs don’t use knowledge graphs; this is why AI-generated images have three eyes or four fingers or six fingers. They don’t recognise it’s wrong because there is a missing link to real world. And since real world is what we have in knowledge graphs, we have all real world concepts, and how they are related to each other.” Research on text models points the same way: LLM performance improves significantly when knowledge graph information is included in the answer generation process. Yet this integration is rarely done in mainstream deployments.
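As a minimal sketch of the idea (not how any image generator actually works), the Python below stores a few hypothetical anatomical facts as subject-relation-object triples and checks observed part counts against them. The observed counts are assumed to come from some upstream detector, which is not shown. The point is that “a human hand has five fingers” is stated explicitly and can be checked, rather than being implicit in pixel statistics.

```python
# Hypothetical knowledge graph as (subject, relation, object) triples.
# For "has_count", the object is a (part, expected_number) pair.
KG = {
    ("human_hand", "has_count", ("finger", 5)),
    ("human_face", "has_count", ("eye", 2)),
    ("finger", "part_of", "human_hand"),
}


def expected_counts(entity: str) -> dict[str, int]:
    """Look up every part-count constraint the graph states for an entity."""
    return {obj[0]: obj[1] for subj, rel, obj in KG if subj == entity and rel == "has_count"}


def violations(entity: str, observed: dict[str, int]) -> list[str]:
    """Compare observed part counts (from an assumed detector) with the graph's facts."""
    problems = []
    for part, expected in sorted(expected_counts(entity).items()):
        found = observed.get(part, 0)
        if found != expected:
            problems.append(f"{entity}: expected {expected} {part}(s), found {found}")
    return problems


print(violations("human_hand", {"finger": 6}))  # ['human_hand: expected 5 finger(s), found 6']
print(violations("human_face", {"eye": 2}))     # []
```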

Hierarchical Forward Planning: How Kasparov Thinks 12 Moves Ahead

The second AGI component, hierarchical forward planning, is illustrated through chess. Garry Kasparov, widely considered one of the greatest chess players in history, is said to have calculated around 12 moves ahead in the positions he analysed. In other words, he mentally traversed a decision tree of possible game states 12 moves deep, evaluating the outcomes of different move sequences and selecting the path most likely to lead to a winning position.

This is precisely what hierarchical forward planning means in computational terms: given a current state, enumerate possible actions, project the resulting states, evaluate them according to an objective function, and select the best action sequence. DeepMind’s AlphaGo applies this mechanism to the game of Go using Monte Carlo Tree Search, guided by neural networks that propose promising moves and evaluate positions. Monte Carlo Tree Search is a proven algorithm for exploring decision trees far too large to enumerate exhaustively. Martin explains the general principle: “You have a certain decision tree, you have a lot of options, you are making decisions, you have some search space, and in this search space, you are searching the best outcome. And you can explain it. You can explain why you chose the following move and not the other move.”
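Martin’s description of a search space in which the choice can be explained maps onto classic game-tree search. The Python sketch below uses plain minimax over a small hand-written tree with hypothetical moves and scores. It is a simpler relative of the Monte Carlo Tree Search used by AlphaGo, not AlphaGo’s algorithm. Alongside the chosen move it returns the line of play that justifies it, which is the explainability property Martin points to.

```python
def minimax(node, maximizing: bool) -> tuple[int, list[str]]:
    """Return the best score reachable from `node` and the line of play that reaches it.

    A node is either an int (an evaluated end position) or a dict mapping
    move names to child nodes. The two players alternate between levels.
    """
    if isinstance(node, int):
        return node, []
    best_score, best_line = None, []
    for move, child in node.items():
        score, line = minimax(child, not maximizing)
        if (best_score is None
                or (maximizing and score > best_score)
                or (not maximizing and score < best_score)):
            best_score, best_line = score, [move] + line
    return best_score, best_line


def explain(tree: dict) -> dict[str, int]:
    """Score of each candidate first move, assuming the opponent replies optimally."""
    return {move: minimax(child, maximizing=False)[0] for move, child in tree.items()}


# Hypothetical two-ply position: our move, then the opponent's reply.
game = {
    "a": {"x": 3, "y": 5},
    "b": {"x": 6, "y": 2},
    "c": {"x": 1, "y": 9},
}
print(minimax(game, maximizing=True))  # (3, ['a', 'x'])
print(explain(game))                   # {'a': 3, 'b': 2, 'c': 1}
```

Moves b and c look tempting because they can reach 6 and 9, but the opponent’s best replies hold them to 2 and 1. The explanation for choosing a is simply the searched tree, which is what makes the decision inspectable.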

LLMs Have No Decision Trees

Under standard decoding, LLMs produce answers token by token without any search over a decision space: each token is chosen from the model’s next-token probabilities and then committed to. They do not evaluate alternative complete responses, compare different approaches, or select based on expected outcome quality. The answer that emerges first is the answer that gets generated; there is no planning, no exploration of alternatives, and no optimisation toward an objective. Adding more parameters and more training data does not change this architecture. Hierarchical planning requires a fundamentally different computational structure from autoregression.

Level Two Thinking: Synthesis Across Search Spaces

The third and most distinctive AGI component is what Martin and Tarmo call level two thinking: the ability to combine findings from multiple different search spaces or knowledge domains to generate genuinely novel information. This is what distinguishes intelligence from mere optimisation.

Search within a single decision space, applied to chess, produces strong play by exploring deeply within the established rules and patterns of chess. Level two thinking in chess would mean drawing on insights from a completely different domain, perhaps mathematics, military strategy, or game theory, and synthesising those external insights into novel chess strategies that no purely chess-focused search would have generated. As Martin explains: “Level two thinking, we have different scenarios, and then we start to combine these different scenarios. It’s not only that we have a big hierarchical decision space that we are traversing. But we have multiple search spaces, scenario one, scenario two, scenario three, and we start to combine them. We look at which gives the best option. And through this combining synthesis, we are creating new information.”
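Level two synthesis is described only informally in the session, so the following Python sketch is a loose illustration under invented assumptions, not a definition. Three hypothetical search spaces each propose ideas with standalone scores, and a hypothetical interaction table gives extra value to certain cross-space pairs. Searching each space on its own finds the best single idea; enumerating combinations across spaces can surface a pair that exists in no single space. Where real interaction values would come from is exactly the hard part, and the sketch simply assumes them.

```python
from itertools import combinations, product

# Hypothetical search spaces: each maps candidate ideas to a standalone score.
SPACES = {
    "chess": {"central_control": 3, "king_safety": 2},
    "military": {"feint": 2, "flanking": 3},
    "game_theory": {"mixed_strategy": 2},
}
# Hypothetical interaction scores: value that only appears when two ideas combine.
SYNERGY = {
    frozenset({"central_control", "feint"}): 4,
    frozenset({"king_safety", "mixed_strategy"}): 1,
}


def best_single() -> tuple[str, int]:
    """Best idea found by searching every space separately."""
    candidates = ((idea, score) for space in SPACES.values() for idea, score in space.items())
    return max(candidates, key=lambda item: item[1])


def best_combination() -> tuple[tuple[str, str], int]:
    """Pair ideas drawn from two different spaces and score each pair as a whole."""
    best = None
    for space_a, space_b in combinations(SPACES, 2):
        for x, y in product(SPACES[space_a], SPACES[space_b]):
            score = SPACES[space_a][x] + SPACES[space_b][y] + SYNERGY.get(frozenset({x, y}), 0)
            if best is None or score > best[1]:
                best = ((x, y), score)
    return best


print(best_single())       # ('central_control', 3)
print(best_combination())  # (('central_control', 'feint'), 9)
```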

Why This Is the Definition of Creativity

This synthesising capacity is what humans mean when they speak of creativity, insight, or genuine intelligence. Newton combined observational astronomy with differential calculus to produce his theory of gravitation. Einstein combined electromagnetism with observations about the speed of light to produce special relativity. Darwin combined geological time, observed species variation, and selective breeding to produce evolutionary theory. Each was a cross-domain synthesis that produced genuinely new information, information that no search within any single domain could have generated. LLMs do not synthesise across search spaces; they reproduce the most statistically probable continuation of existing text, so any creative insights in their outputs come from the training data rather than from synthesis. For how ChainAware’s actual AI architecture applies domain-specific predictive models rather than LLMs, see our Web3 AI agents guide.

Predictive AI That Actually Works in Web3

ChainAware Rug Pull Detector — Behavioral AI, Not Statistical Guessing

ChainAware uses domain-specific predictive models trained on blockchain behavioral patterns — not LLM autoregression. Predicts rug pulls before they happen by identifying behavioral signatures in pool dynamics and liquidity provider addresses. 95%+ accuracy. ETH, BNB, BASE. Free for individual checks.

AlphaGo as Proof: AGI Components Work When Combined

DeepMind’s AlphaGo, which defeated Lee Sedol, one of the world’s strongest Go players, in 2016, demonstrated in Martin and Tarmo’s view that combining hierarchical forward planning with synthesis produces genuine emergent intelligence rather than mere optimisation. The evidence is in AlphaGo’s most famous moments, above all move 37 of its second game against Lee Sedol: it played moves that professional commentators initially took for mistakes but that turned out to be strategically strong.

These novel moves were not simply reproduced from training data. Rather, they emerged from combining deep tree search and Monte Carlo sampling with neural-network position evaluation refined through self-play, which allowed the system to evaluate positions in ways that went beyond what any human game record would have suggested. In Tarmo and Martin’s framework, AlphaGo was performing level two thinking within the constrained domain of Go: combining different search trees and synthesising genuinely new strategic information. As Martin notes: “AlphaGo, the DeepMind AlphaGo, it started creating new moves on the Go board. It started creating new moves that no one had played before. That’s intellect. Not repeating, like LLMs. LLMs repeat statistically the words that someone else has produced. In AGI, it creates genuinely new information.”

The Llama 8B Experiment: Small Model + Graph Beats Large LLM

Tarmo cites a specific research result that directly challenges the “scale is the answer” narrative dominating mainstream AI coverage. The experiment used Meta’s Llama 8B model (about 8 billion parameters, orders of magnitude smaller than the largest commercial LLMs, whose exact sizes are not published) and combined it with graph-based optimisation techniques. According to Tarmo, this small hybrid system outperformed much larger standalone LLMs, including models from OpenAI and Google, on mathematical reasoning benchmarks.

The implication is significant: if a model orders of magnitude smaller achieves better results by integrating complementary algorithms (graph optimisation, structured reasoning), then the problem with current AI is not insufficient scale but insufficient architecture. Adding more GPU compute and more training data to the same LLM architecture does not add the reasoning capability that graph optimisation introduces. As Tarmo argues: “You don’t need this huge compute power to build AGI. If you think that your only solution is to scale up, then maybe your algorithm is wrong.” For context on how similar principles apply to ChainAware’s predictive models, see our predictive AI for Web3 guide.
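The session does not describe the experiment’s method in enough detail to reproduce it, so the sketch below is not that method. It only illustrates the architectural point in its simplest form: generate-and-verify search, in which a weak generator (here a random guesser standing in for a small model, which is an assumption) is wrapped in a loop with a cheap, exact checker. A single guess is usually wrong; the search around the generator is what makes the result reliable, without making the generator any bigger.

```python
import random
from typing import Optional


def propose(n: int, rng: random.Random) -> int:
    """Stand-in for a small, unreliable generator: guesses a divisor of n."""
    return rng.randint(2, n - 1)


def check(n: int, d: int) -> bool:
    """Cheap, exact verifier: is d a nontrivial divisor of n?"""
    return 1 < d < n and n % d == 0


def solve_with_search(n: int, budget: int, seed: int = 0) -> Optional[int]:
    """Generate-and-verify: sample up to `budget` guesses, return the first that checks out."""
    rng = random.Random(seed)
    for _ in range(budget):
        guess = propose(n, rng)
        if check(n, guess):
            return guess
    return None  # no verified answer within budget (always the case for a prime n)


print(solve_with_search(91, budget=2000))  # 7 or 13, since 91 = 7 * 13
print(solve_with_search(97, budget=50))    # None: 97 is prime
```

Each individual guess for 91 is correct only about 2 times in 89, yet with a modest budget the search almost always returns a verified divisor. The reliability comes from the surrounding algorithm, not from the generator.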

Who Benefits from the LLM Narrative: Corporations, Not AGI

If the all-in-on-LLMs approach is technically wrong — if bigger models with more compute will not produce AGI — then why does the entire industry continue pushing it? Martin and Tarmo offer a clear economic explanation that follows the money rather than the technical merits.

The primary beneficiaries of the current LLM investment wave are Nvidia (GPU hardware), Microsoft (OpenAI investment + Azure compute), and large corporations that can use LLMs to automate their back-office operations. LLMs are genuinely useful for corporations: they can summarise documents, draft routine correspondence, extract data from forms, generate code templates, and handle repetitive customer service queries. All of these are level-one-thinking tasks, pattern-following applications that don’t require reasoning. As Tarmo explains: “LLMs will have a massive benefit for back offices, back office automatisation. But let’s look at the real economy: we have 20% corporations and 80% are small-medium enterprises.” Corporations, despite making up only about 20% of the economy in Tarmo’s rough split, drive the vast majority of AI investment because they have budgets large enough to pay for enterprise LLM deployments and the back-office scale that makes automation financially significant.

The Profit Margin Mechanism

For corporations, LLMs provide a mechanism to increase profit margins without product innovation. By replacing back-office workers with LLM automation, a corporation reduces its labour cost base while maintaining its existing product and service offering. Revenue stays the same; costs fall; margins expand. This is operationalisation — a legitimate form of efficiency improvement — but it is explicitly not the product or service innovation that defines genuine value creation. As Martin notes: “Without innovating new products, they can increase the profit margin. And if you go over to AGI or level two thinking, then you start doing innovation — creating something new.” The corporate interest in LLMs is therefore real and rational, but it is orthogonal to building AGI.

The SME Problem: 80% of the Economy That LLMs Cannot Help

Small and medium enterprises make up, by Tarmo’s rough estimate, about 80% of the economy. They are also the part of the economy where LLMs provide the least benefit, and where genuine AGI would provide the most transformative value.

SMEs operate primarily through personalised client interaction and creative problem-solving. A plumber diagnosing why a heating system produces unusual noises, a graphic designer interpreting an ambiguous brief and producing a creative solution, a management consultant analysing a unique business problem — these are all level two thinking tasks. They require understanding the specific situation, bringing relevant knowledge from multiple domains, generating novel solutions tailored to the particular context, and explaining the reasoning behind those solutions. None of these tasks can be automated by pattern-matching over training data.

Why Corporations Become Operationally Inflexible

Tarmo makes an important observation about why corporations are so dependent on level one thinking operationalisation while SMEs require level two: corporations succeed precisely by standardising and scaling processes that were once creative SME innovations. A startup develops a novel product, refines it through creative iteration, and then — if successful — scales it by systematising everything into repeatable procedures. At that point, the corporation effectively becomes a machine for executing documented procedures, and level two thinking is actively discouraged (it would disrupt the standardised procedures). SMEs, because they cannot afford the fixed cost of this systematisation, must solve each client problem freshly. This is why AGI — which would amplify level two thinking — would benefit SMEs far more than corporations. But precisely because SMEs have smaller investment budgets, their needs do not drive the AI investment narrative. For more on how Web3 projects (which operate more like innovative SMEs) can use real predictive AI, see our guide to why AI agents will accelerate Web3.

AGI on Blockchain: Why It Is Impossible

Having established what AGI requires and why LLMs don’t provide it, Martin and Tarmo turn to the specific Web3 dimension of the discussion: the projects claiming to build AGI on the blockchain. The assessment is unambiguous.

AGI requires intensive computation: deep hierarchical search trees, large-scale knowledge graph traversal, and iterative synthesis across multiple search spaces. Blockchain networks are designed for decentralised consensus, a completely different computational problem: every full node re-executes every transaction, and each computation step is metered and paid for in fees (gas, on Ethereum). Running complex AI inference on a blockchain is therefore not just technically challenging; it contradicts the fundamental architecture of both systems. Blockchain’s value lies in trustless state persistence and verifiable computation at modest scale, whereas AGI computation requires enormous, flexible compute resources at minimal marginal cost.

The Real Use Case: Predictive AI on Blockchain Data

The genuine AI opportunity in Web3 is predictive AI using blockchain data, the use case ChainAware has been developing since 2021. Because every transaction costs a fee, blockchain data provides extremely high-quality behavioral signals: deliberate financial decisions recorded permanently and publicly. Domain-specific predictive models trained on this data achieve high accuracy at specific prediction tasks. As Martin explains: “The web three data, because it’s a proof of work data, meaning that the transaction cost has a very high predictability power, it gives you very high accuracy in your prediction algorithms.” ChainAware’s fraud detection (98% accuracy), rug pull prediction, credit scoring, and user intention calculation are all examples of this real AI use case. For the complete picture, see our Web3 AI agents overview and our ChainAware AI roadmap.
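ChainAware’s model internals are not described in the session, so the following is only a generic sketch of what “predictive AI on blockchain data” can look like in practice: a logistic-regression-style score over a few behavioral wallet features. The feature names, weights, and bias are invented for illustration and are not ChainAware’s. Returning each feature’s contribution alongside the probability is one simple way a model of this kind can show why a wallet was flagged.

```python
import math

# Hypothetical behavioral features and weights, invented for illustration only.
WEIGHTS = {
    "interacted_with_flagged_contracts": 2.0,  # 1.0 if yes, 0.0 if no
    "log_wallet_age_days": -0.8,               # older wallets lower the score
    "share_of_txs_to_new_tokens": 1.5,         # fraction between 0 and 1
}
BIAS = -1.0


def fraud_probability(features: dict[str, float]) -> tuple[float, dict[str, float]]:
    """Logistic score plus each feature's contribution to the log-odds."""
    contributions = {name: w * features.get(name, 0.0) for name, w in WEIGHTS.items()}
    log_odds = BIAS + sum(contributions.values())
    return 1.0 / (1.0 + math.exp(-log_odds)), contributions


wallet = {
    "interacted_with_flagged_contracts": 1.0,
    "log_wallet_age_days": math.log(3),
    "share_of_txs_to_new_tokens": 0.9,
}
probability, reasons = fraud_probability(wallet)
print(f"fraud probability: {probability:.2f}")
for name, value in sorted(reasons.items(), key=lambda kv: -abs(kv[1])):
    print(f"  {name}: {value:+.2f}")  # largest drivers of the score first
```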

Real AI in Web3: Predictive Models, Not LLM Wrappers

Martin observes that the CoinGecko AI project list grew from approximately 20 genuine AI projects in 2021 to approximately 200 projects by the time of the session. The problem is that a significant portion of that growth came from ChatGPT interface wrappers: projects that place a user interface in front of an OpenAI API call and present it as a Web3 AI product. This adds no intelligence, no blockchain-specific capability, and no genuine technical innovation.

Tarmo references Vitalik Buterin’s essay on AI and crypto, noting that Buterin — while framing it diplomatically — effectively arrives at the same conclusion: the genuine AI use case in blockchain is predictive AI, not generative AI. As Martin notes: “Okay, he’s packaging it very nicely, politically correctly. But what he’s saying de facto is predictive AI — that’s in the blockchain. Generative AI has no space on the blockchain.” The projects worth attention in the Web3 AI space are those building domain-specific predictive models using blockchain data — not those building interfaces for general-purpose LLMs that happen to be deployed on a blockchain.

Specialized AGIs: The Actual Future of Artificial Intelligence

Martin and Tarmo reject the “singularity” framing of AGI — the idea that there will be one overwhelming superintelligence that knows everything and can do everything. Instead, they predict the emergence of specialised AGIs: domain-specific intelligent systems that achieve genuine level-two thinking within specific knowledge areas. This prediction follows directly from the knowledge graph component of the AGI framework.

Knowledge graphs are necessarily domain-specific — you cannot build one knowledge graph that captures all human knowledge with the precision required for reasoning. AlphaGo is a specialised AGI for Go; a specialised medical diagnosis AGI would need a deep knowledge graph of medical conditions, symptoms, treatments, and their relationships. A legal reasoning AGI would need a knowledge graph of legal precedents, statutes, and their interpretations. Each domain’s knowledge graph is a distinct body of structured information requiring specific expert construction. As Martin explains: “There will be not one AGI — there will be multiple AGIs. Because the knowledge that humans know alone is so big. And there will be not this everything-overwhelming AGI. We are getting a lot of specialized AGIs which are then synthesising new information, helping you to think, to synthesise new information in their respective domains.”

The Disruption Pattern: The Dow Jones Since 1930

Martin introduces the Dow Jones Industrial Average as evidence of how technological innovation disrupts incumbents, and as a prediction for what specialised AGIs will do to today’s dominant corporations. The DJIA was first published in 1896. General Electric, one of its original components, was the last of them to remain in the index until its removal in 2018, and GE itself has restructured dramatically. Most of the other early components were acquired, went bankrupt, or faded into irrelevance as new technologies with lower unit costs displaced their business models. Tarmo frames the implication for AGI: “If you have this massive wave of innovation, there will be specialised level two AGIs doing new things, creating something new. The impact will be the same on these corporations as we have seen from 1930 till today, the same that the power balance will change.” Corporations that cannot adapt to the innovation wave from domain-specific AGIs will face displacement, not through internal job cuts but from entirely new competitors whose AGI-enabled capabilities make existing business models obsolete. For more on the intersection of innovation and the Web3 economy, see our crossing the chasm guide.

The DeFi Narrative Parallel: When VCs Push the Wrong Path

Tarmo closes the substantive discussion with an observation that connects the LLM narrative to ChainAware’s direct experience with a previous VC-driven narrative: the DeFi boom of 2020-2022. The parallel is uncomfortable but precise.

In DeFi, venture capitalists collectively pushed a narrative — peer-to-pool lending with variable rates — that had fundamental regulatory and structural problems. The pooling of assets triggered securities regulations in most jurisdictions, requiring licences that most DeFi projects lacked. The variable rate structure was genuinely inferior to fixed-rate products for most retail users. SmartCredit.io identified these problems from the beginning and built around them. The VC-funded projects that built on the flawed narrative raised tens of millions of dollars and then shut down when the regulatory and market realities became unavoidable. As Tarmo notes: “Most of DeFi companies are regulatory non-compliant — as soon as you have pooling, it is over. You need a licence in every country. This was pushed by venture capitalists, pushed all the DeFi industry into a kind of stopping road.”

The Same Pattern Now in LLMs

Tarmo sees the identical pattern emerging with LLMs: venture capitalists and large technology incumbents are collectively pushing a narrative — scale LLMs to build AGI — that has fundamental technical problems. The investments flow; the GPU orders multiply; the compute centres grow. Meanwhile, the actual components needed for AGI (knowledge graphs, hierarchical planning, synthesis mechanisms) are being developed in relative obscurity with far less investment. The outcome, Tarmo predicts, will be similar to DeFi: a period of massive investment in the wrong direction, followed by a recalibration when the technical limitations become undeniable. For the economic analysis of what genuine AI-driven innovation means for Web3 specifically, see our AI agents acceleration guide.

Comparison Tables

LLMs vs AGI: A Technical and Capability Comparison

| Property | Large Language Models (LLMs) | Artificial General Intelligence (AGI) |
|---|---|---|
| Core mechanism | Autoregression: predict the most probable next word | Hierarchical search + knowledge graphs + synthesis |
| Thinking level | Level one: pattern response, no explanation | Level two: deliberate reasoning, explainable decisions |
| Can explain its answers? | No; there is no internal reasoning process to describe | Yes; the decision tree traversal is inspectable |
| Knowledge of real world | Statistical patterns from text; no structured model of reality | Knowledge graphs: explicit real-world constraints |
| Novel information creation | No; reproduces statistical patterns from training data | Yes; synthesis across search spaces generates new ideas |
| AlphaGo analogy | Cannot create novel Go moves; only statistically likely ones | Creates moves never played before (level two synthesis) |
| Alice puzzle result | Fails; answers two instead of three | Solves correctly by modelling the family structure |
| Scale solves limitations? | No; wrong algorithm, not insufficient scale | Scale helps, but an architecture change is required |
| Who primarily benefits | Corporations with back-office automation needs | SMEs and innovation-driven organisations |
| Blockchain compatibility | No special advantage; a simple API wrapper | Impossible to run on blockchain architecture |
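The “Core mechanism” row can be made concrete with a toy sketch. It assumes a bigram word model, which is far simpler than a transformer-based LLM, but it runs the same autoregressive loop: look at what has been generated so far, append the most probable next word, repeat. The corpus and the names `train_bigrams` and `generate` are illustrative assumptions.

```python
from collections import Counter, defaultdict


def train_bigrams(corpus: str) -> dict[str, Counter]:
    """Count which word follows which in the (toy) training text."""
    counts: dict[str, Counter] = defaultdict(Counter)
    words = corpus.split()
    for prev, nxt in zip(words, words[1:]):
        counts[prev][nxt] += 1
    return counts


def generate(counts: dict[str, Counter], start: str, max_words: int = 8) -> list[str]:
    """Greedy autoregression: repeatedly append the most frequent follower."""
    out = [start]
    for _ in range(max_words - 1):
        followers = counts.get(out[-1])  # .get avoids creating empty entries
        if not followers:
            break  # word never seen with a successor: nothing to predict
        out.append(followers.most_common(1)[0][0])
    return out


counts = train_bigrams("alice has two sisters . alice has two brothers . bob has two sisters .")
print(generate(counts, "alice", 4))
```

Started from “alice”, the loop emits “has two sisters” because that continuation is the most frequent one in its data, not because anything about Alice’s family was modelled.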

Predictive AI (ChainAware) vs LLM-Based Web3 Projects

| Property | LLM-Based Web3 Projects | Predictive AI (ChainAware) |
|---|---|---|
| AI mechanism | Autoregressive text generation | Domain-specific behavioral prediction models |
| Data source | General public text (not blockchain-specific) | On-chain financial transaction history |
| Output type | Text; cannot explain its reasoning | Probability score grounded in behavioral patterns |
| Accuracy measurement | Not measurable; no ground truth per output | 98% backtested against CryptoScamDB |
| Blockchain relevance | None; the same LLM works identically off-chain | Specific to blockchain behavioral data |
| Real use cases | Chatbots, content generation (not Web3-specific) | Fraud detection, rug pull prediction, user intentions |
| Hallucination risk | High; autoregression produces false outputs | Low; specific models trained on verifiable data |
| Investment required | Enormous; billions for compute infrastructure | Domain expertise + blockchain data access (free) |
| Tarmo’s assessment | “Gambling”: no product, narrative only | “Beautiful AI algorithms on blockchain” |

Frequently Asked Questions

Why can’t LLMs solve the Alice’s brothers puzzle?

Solving the puzzle requires building a mental model of the family structure and reasoning about Alice’s role within it. Alice is herself a sister to her brothers, so each brother’s sisters are Alice’s sisters plus Alice. LLMs produce answers through statistical pattern matching over text: they recognise that the prompt is about Alice’s family and generate the most probable response pattern from their training data, which typically associates questions about “Alice’s sisters” with the number two. No reasoning about the family structure takes place. The failure shows that LLMs lack the capacity for logical reasoning, a fundamental limitation that additional scale does not address.
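A minimal sketch of what “modelling the family structure” means in practice, assuming the puzzle variant in which Alice has two sisters (consistent with the “two instead of three” result above); the number of brothers is an arbitrary assumption and does not change the answer. The `Person` type and helper functions are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Person:
    name: str
    female: bool


def build_siblings(alice_sisters: int, alice_brothers: int) -> list[Person]:
    """Explicit model of one group of siblings that includes Alice herself."""
    kids = [Person("Alice", True)]
    kids += [Person(f"sister{i}", True) for i in range(alice_sisters)]
    kids += [Person(f"brother{i}", False) for i in range(alice_brothers)]
    return kids


def count_sisters(person: Person, siblings: list[Person]) -> int:
    """A person's sisters are all female siblings other than that person."""
    return sum(1 for p in siblings if p.female and p != person)


family = build_siblings(alice_sisters=2, alice_brothers=3)  # brother count is arbitrary
brother = next(p for p in family if not p.female)
print(count_sisters(brother, family))  # 3: Alice's two sisters plus Alice
```

Because Alice is an explicit member of the sibling set, the count for her brother includes her automatically; no pattern over text is consulted.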

What are the three components needed for AGI, and are they available?

The three components are: (1) hierarchical forward planning — the ability to search decision trees and evaluate multi-step action sequences, as demonstrated by chess engines and AlphaGo’s Monte Carlo Tree Search; (2) knowledge graphs — structured representations of real-world entities and relationships, in mass production for 10+ years in Google and Bing search; (3) synthesis — combining findings across different search spaces to generate novel information, as demonstrated by AlphaGo’s creation of unprecedented Go moves. All three exist as mature technologies. The barrier to AGI is integrating them, not developing them from scratch. The Llama 8B + graph optimisation experiment shows that integration already outperforms much larger standalone LLMs on specific tasks. See AlphaGo’s Wikipedia page for the technical background.
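Forward planning as decision-tree search can be illustrated on a toy game. The sketch below assumes a simple subtraction game (remove 1, 2 or 3 stones; whoever takes the last stone wins) and searches every continuation to the end of the game. It is an exhaustive search, feasible only because the tree is tiny; chess engines cut the search off at a fixed depth and apply an evaluation function, and AlphaGo used Monte Carlo Tree Search guided by neural networks. Nothing here is hierarchical; it only shows the core idea of evaluating multi-step action sequences before acting. The function names are hypothetical.

```python
from functools import lru_cache
from typing import Optional

MOVES = (1, 2, 3)  # assumption: a player may remove 1, 2 or 3 stones


@lru_cache(maxsize=None)
def can_force_win(stones: int) -> bool:
    """Search all continuations: the player to move wins if some move leaves
    the opponent in a position from which the opponent cannot win.
    With 0 stones left, the previous player took the last stone and won."""
    return any(m <= stones and not can_force_win(stones - m) for m in MOVES)


def plan_move(stones: int) -> Optional[int]:
    """Return a move that guarantees a win, or None if every line loses."""
    for m in MOVES:
        if m <= stones and not can_force_win(stones - m):
            return m
    return None


print(plan_move(7))  # 3: leaves 4 stones, a lost position for the opponent
```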

Why is AGI impossible on a blockchain?

Blockchain networks are architecturally designed for decentralised consensus — verifying that a distributed set of nodes agrees on a shared state. This requires that all computation be deterministic, verifiable by every node, and recorded permanently. AGI computation — deep hierarchical search, knowledge graph traversal, iterative synthesis — requires enormous, flexible computational resources with minimal marginal cost. Running these computations on a blockchain would be prohibitively expensive, extremely slow, and structurally incompatible with how blockchain consensus works. Predictive AI using blockchain data (running models off-chain that read on-chain data) is feasible and highly effective, as ChainAware demonstrates. “AGI on blockchain” is a narrative without technical foundation.
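The determinism requirement can be shown with a toy simulation. This is a sketch under stated assumptions, not any real chain’s protocol: “nodes” are just repeated function calls, the state root is a SHA-256 hash of the JSON-serialised state, and a seeded random number stands in for any non-deterministic step (such as stochastic sampling inside a search). Every node must replay the same computation and obtain a bit-identical result, so the work is repeated on every node, and any randomness breaks agreement.

```python
import hashlib
import json
import random
from typing import Optional


def state_root(state: dict) -> str:
    """Hash a canonical serialisation of the state (sorted keys)."""
    return hashlib.sha256(json.dumps(state, sort_keys=True).encode()).hexdigest()


def execute(state: dict, txs: list, rng: Optional[random.Random] = None) -> str:
    """Apply transfers and return the resulting state root. If `rng` is given,
    a random value is written into the state to mimic non-deterministic work."""
    new_state = dict(state)
    for sender, receiver, amount in txs:
        new_state[sender] = new_state.get(sender, 0) - amount
        new_state[receiver] = new_state.get(receiver, 0) + amount
    if rng is not None:
        new_state["noise"] = rng.random()
    return state_root(new_state)


genesis = {"wallet_a": 10, "wallet_b": 5}
txs = [("wallet_a", "wallet_b", 3)]

# Three nodes replay the same block; consensus needs exactly one distinct root.
deterministic = {execute(genesis, txs) for _node in range(3)}
nondeterministic = {execute(genesis, txs, random.Random(node)) for node in range(3)}
print(len(deterministic), len(nondeterministic))  # 1 3
```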

Why do AI-generated images have too many fingers?

Because LLMs and image generation models lack knowledge graphs. A knowledge graph of human anatomy explicitly encodes that human hands have exactly five fingers with specific structures and proportions. Without this structured real-world constraint, image generators learn statistical patterns about what hands look like in training images. These statistical patterns produce generally hand-shaped outputs but cannot enforce the precise anatomical constraint that every human hand has exactly five fingers in a specific arrangement. Adding knowledge graphs to image generation models would largely eliminate this class of errors — and research papers confirm that knowledge graph integration significantly improves AI performance on tasks requiring real-world knowledge.
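What an explicit real-world constraint looks like can be sketched with a miniature knowledge graph of (subject, predicate, object) triples. The triples, predicate names and helper functions are hypothetical, and the `detected` input stands in for the output of some detector run over a generated image. This is a post-hoc check that only illustrates the kind of constraint a graph encodes; how knowledge graphs are integrated into a generative model’s training or sampling is outside this sketch.

```python
# Hypothetical miniature knowledge graph as (subject, predicate, object) triples.
TRIPLES = {
    ("finger", "part_of", "human_hand"),
    ("human_hand", "part_of", "human_body"),
    ("human_hand", "finger_count", 5),
}


def lookup(subject: str, predicate: str) -> list:
    """Return every object linked to `subject` by `predicate`."""
    return [o for s, p, o in TRIPLES if s == subject and p == predicate]


def check_anatomy(detected: list[tuple[str, int]]) -> list[str]:
    """Flag detected hands whose finger count contradicts the graph.

    `detected` is an assumed detector output: one (entity_type, finger_count)
    pair per hand found in a generated image.
    """
    problems = []
    for i, (entity, count) in enumerate(detected):
        for expected in lookup(entity, "finger_count"):
            if count != expected:
                problems.append(f"{entity} #{i}: {count} fingers, expected {expected}")
    return problems


print(check_anatomy([("human_hand", 5), ("human_hand", 6)]))
# ['human_hand #1: 6 fingers, expected 5']
```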

What is the real AI use case in Web3?

The real AI use case in Web3 is domain-specific predictive AI using blockchain behavioral data. Financial transactions on blockchain — especially on high-gas-cost chains like Ethereum — are deliberate decisions that leave behavioral traces of extremely high signal quality. Training predictive models on these traces enables accurate prediction of future behavioral patterns: whether a wallet is likely to commit fraud (98% accuracy), whether a pool will rug pull, what a wallet owner’s likely next financial actions are, and what their credit risk profile is. This is what ChainAware has built and what Vitalik Buterin’s essay on AI and crypto implicitly endorses. LLM wrappers and “AGI on blockchain” projects are not genuine Web3 AI use cases. For the complete analysis, see our AI roadmap.
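The difference between a predictive model and text generation can be sketched with plain logistic regression: the model outputs a probability, and its accuracy can be measured against labelled examples. Everything below is an illustrative assumption, not ChainAware’s model or data: the two features (share of inflows from flagged addresses, wallet age in years), the six toy labelled wallets, the learning rate and the epoch count are all invented for the sketch.

```python
import math

# Hypothetical toy data: ((share of inflows from flagged addresses, wallet age in years), label)
TRAIN = [
    ((0.9, 0.1), 1), ((0.8, 0.3), 1), ((0.7, 0.2), 1),   # labelled fraud
    ((0.0, 3.0), 0), ((0.1, 2.0), 0), ((0.05, 4.0), 0),  # labelled clean
]


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def predict(w: list[float], b: float, x: tuple) -> float:
    """Probability that a wallet with features `x` is fraudulent."""
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)


def train(data: list, lr: float = 0.5, epochs: int = 5000) -> tuple[list[float], float]:
    """Fit logistic regression with full-batch gradient descent on log loss."""
    dim, n = len(data[0][0]), len(data)
    w, b = [0.0] * dim, 0.0
    for _ in range(epochs):
        gw, gb = [0.0] * dim, 0.0
        for x, y in data:
            err = predict(w, b, x) - y  # gradient of log loss w.r.t. the logit
            for j in range(dim):
                gw[j] += err * x[j] / n
            gb += err / n
        w = [wj - lr * g for wj, g in zip(w, gw)]
        b -= lr * gb
    return w, b


def accuracy(w: list[float], b: float, data: list) -> float:
    """Share of labelled examples classified correctly at a 0.5 threshold."""
    return sum((predict(w, b, x) >= 0.5) == (y == 1) for x, y in data) / len(data)


w, b = train(TRAIN)
print(accuracy(w, b, TRAIN), round(predict(w, b, (0.85, 0.2)), 3))
```

Scoring predictions against labels is, in miniature, the kind of check the comparison table calls backtesting; a real evaluation would use held-out data rather than the training set.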

Real Predictive AI for Web3 — Not LLM Narratives

ChainAware Prediction MCP — Fraud, Rug Pull, Intentions. One API.

Domain-specific predictive models trained on blockchain behavioral data. 98% fraud detection accuracy. Rug pull prediction before it happens. User intention calculation. Credit scoring. Not autoregression — actual predictive AI with verifiable accuracy. 31 MIT-licensed agents. 8 blockchains.

This article is based on X Space #13 hosted by ChainAware.ai co-founders Martin and Tarmo. For questions or integration support, visit chainaware.ai.