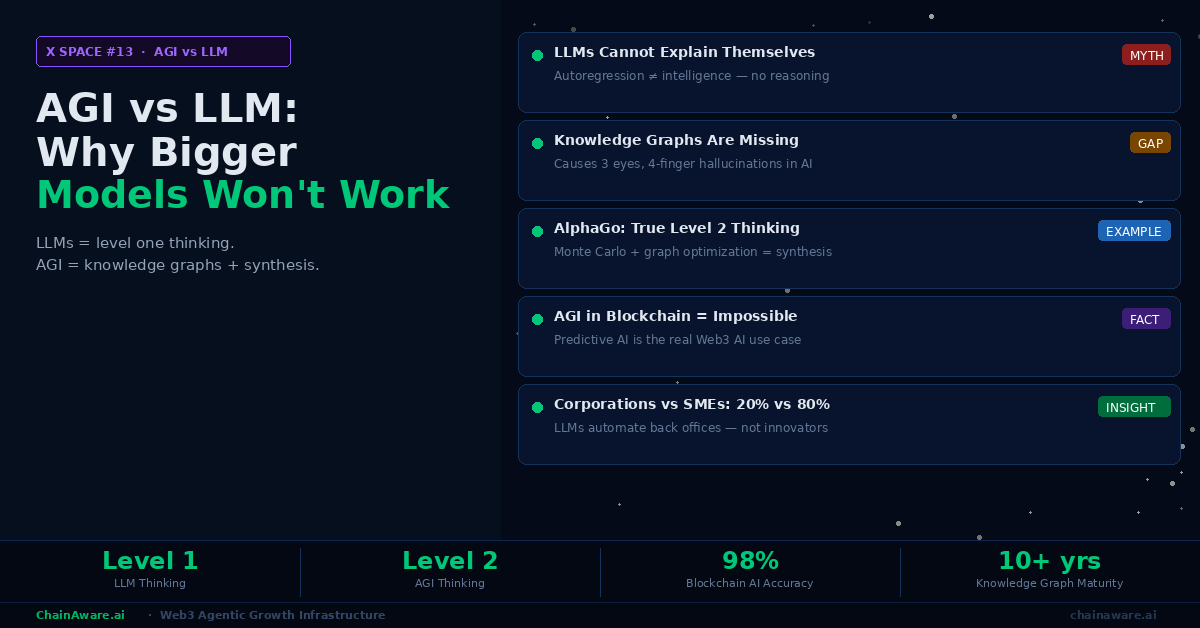

AGI vs LLM: Why Bigger Models Won’t Get Us to Artificial General Intelligence

X Space: AGI vs LLM, and why bigger models won’t get us to Artificial General Intelligence. With ChainAware co-founders Martin and Tarmo.

Core thesis: AGI (Artificial General Intelligence) does not exist, and scaling LLMs will not produce it. Web3 founders and investors need to understand this distinction to judge which AI projects have real utility and which have only a narrative.

Key distinctions: AGI is AI with human-level reasoning across all domains; it does not yet exist. An LLM is a large language model trained on text prediction: statistical autocomplete, not reasoning. Narrow predictive AI means purpose-built models for specific classification tasks, such as fraud detection and behavioral prediction.

LLMs hallucinate on numerical on-chain data, cannot make deterministic fraud classifications, and run at 1-5 second latency, which is 100x too slow for real-time use. Real competitive advantage in Web3 AI requires proprietary training data, a domain-specific model architecture, and iterative accuracy improvement.

The diagnostic question for any Web3 AI project: what specifically does it predict? If the project cannot answer with a metric (98% accuracy, sub-second response), it is narrative AI, not utility AI.

ChainAware uses narrow predictive AI: ML models trained on 14M+ on-chain wallet behavioral histories, 98% fraud prediction accuracy, real-time response, 8 blockchains. Not ChatGPT. Not a wrapper. Proprietary.

Prediction MCP · 32 open-source agents · chainaware.ai
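The latency claim in the summary can be made concrete with a little arithmetic. The sketch below is only an illustration: it takes the two figures stated above (1-5 second LLM latency, "100x too slow") and works out the real-time budget they imply. The function name `implied_realtime_budget_ms` is hypothetical, and the resulting numbers are derived from the summary's own figures, not measured.

```python
def implied_realtime_budget_ms(llm_latency_s: float, slowdown_factor: float = 100.0) -> float:
    """Return the latency budget (in ms) implied by the claim that a given
    LLM latency is `slowdown_factor` times too slow for real-time use."""
    return llm_latency_s * 1000.0 / slowdown_factor


# The summary quotes a 1-5 second LLM latency range.
for latency_s in (1.0, 5.0):
    budget_ms = implied_realtime_budget_ms(latency_s)
    print(f"{latency_s:.0f} s LLM latency -> {budget_ms:.0f} ms implied real-time budget")
```

Under these assumptions, "100x too slow" corresponds to a real-time budget of roughly 10 to 50 milliseconds per decision.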